Pure-tone and Speech Audiometry

The evaluation of all complaints of hearing loss begins with the determination of air- and bone-conduction pure-tone thresholds, the speech recognition threshold (SRT), and word recognition scores. These basic measurements reveal the severity of hearing loss within the tested frequencies and indicate whether the loss is conductive, sensorineural, or mixed. By convention, routine audiometric measurements are made only for the frequencies in the range of 250 to 8,000 Hz, even though normal hearing may encompass a far greater spectrum (20 to 20,000 Hz). Some patients’ complaints of hearing problems and tinnitus may not be diagnosed if the losses occur in frequencies not routinely tested. It is possible to obtain thresholds at frequencies above 8,000 Hz, but specialized equipment and calibration procedures are required.

Speech audiometry refers to measurements of the SRT and word recognition ability. Taken together, these two measures serve as a check on the pure-tone results, help in the diagnosis of retrocochlear problems, and indicate how the hearing loss affects the patient’s day-to-day communication. The SRT is the patient’s threshold for spondee words, and it should agree closely with pure-tone thresholds at 500, 1,000, and 2,000 Hz. Word recognition is a suprathreshold test that indicates how well the patient is able to understand speech when it is made loud enough to hear easily. Word recognition is expressed as the percentage of phonetically balanced words correctly recognized at a specified level above the SRT (usually 40 to 50 dB above the SRT). In general, better pure-tone thresholds result in higher word recognition scores.
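The cross-check between the SRT and the pure-tone results can be sketched in a few lines. This is a minimal illustration, not a clinical tool: it assumes the thresholds at 500, 1,000, and 2,000 Hz are summarized as a simple three-frequency average, and the function names, the 10-dB tolerance, and the example audiogram are all hypothetical.

```python
def pure_tone_average(thresholds_db_hl):
    """Average the air-conduction thresholds at 500, 1,000, and 2,000 Hz."""
    speech_frequencies = (500, 1000, 2000)
    total = sum(thresholds_db_hl[f] for f in speech_frequencies)
    return total / len(speech_frequencies)


def srt_agrees_with_pta(srt_db_hl, thresholds_db_hl, tolerance_db=10):
    """Return True when the SRT lies within tolerance_db of the average.

    tolerance_db is an illustrative assumption, not a value from the text.
    """
    difference = abs(srt_db_hl - pure_tone_average(thresholds_db_hl))
    return difference <= tolerance_db


# Hypothetical audiogram for one ear (frequency in Hz -> threshold in dB HL).
example_thresholds = {250: 20, 500: 25, 1000: 30, 2000: 35, 4000: 50, 8000: 55}
example_pta = pure_tone_average(example_thresholds)
```

A large disagreement between the two values would prompt a recheck of the pure-tone results, which is the "check" role described above.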

An essential part of audiometric testing is masking. As a general rule, masking must be used whenever it is possible for the test signal to cross the skull and be heard in the nontest ear. For air-conduction thresholds, this possibility exists when the signal to the test ear exceeds bone-conduction sensitivity in the nontest ear by an amount equal to or greater than interaural attenuation. With standard audiometric earphones, attenuation across the skull is 40 dB; with insert phones, the attenuation is 70 to 90 dB, depending on the depth of insertion (1, 2). For bone-conduction testing, interaural attenuation is essentially nonexistent. Therefore, the nontest ear must be masked whenever an air-bone gap is present. These masking principles apply to both pure-tone and speech audiometry.
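The masking rule above reduces to a simple comparison, sketched here for illustration. The interaural attenuation values come from the text, with the lower bound of the insert-phone range chosen as a conservative assumption. The function names and the 10-dB criterion for calling an air-bone gap "present" are hypothetical.

```python
# Interaural attenuation in dB, taken from the text. For insert phones the
# lower bound of the 70 to 90 dB range is used (a conservative assumption).
INTERAURAL_ATTENUATION_DB = {"supra_aural": 40, "insert": 70}


def needs_masking_air(test_level_db_hl, nontest_bone_db_hl, transducer="supra_aural"):
    """Air conduction: mask when the test-ear signal exceeds the nontest-ear
    bone-conduction threshold by at least the interaural attenuation."""
    excess_db = test_level_db_hl - nontest_bone_db_hl
    return excess_db >= INTERAURAL_ATTENUATION_DB[transducer]


def needs_masking_bone(test_air_db_hl, test_bone_db_hl, min_gap_db=10):
    """Bone conduction: mask when an air-bone gap is present.

    min_gap_db is an illustrative assumption, not a value from the text.
    """
    return (test_air_db_hl - test_bone_db_hl) >= min_gap_db
```

For example, a 60-dB HL tone delivered through standard earphones with a nontest-ear bone-conduction threshold of 10 dB HL exceeds the 40-dB attenuation and would call for masking, whereas the same tone through insert phones would not under the 70-dB assumption.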

Some hearing disorders are characterized by distinctive audiometric configurations, such as the high-frequency notch associated with noise exposure. Other audiometric patterns, such as the degree of symmetry between ears, may determine if additional evaluation of the hearing loss is needed.

Pure-tone and speech audiometry should be done for all patients before and after otologic surgery, unless the patient is unable to cooperate. To allow for adequate healing after surgery, the first postoperative test usually is deferred until about 6 weeks after the operation. If a postoperative complication is suspected, testing is done sooner. Follow-up audiometry may be done as early as 1 to 2 weeks after myringotomy with placement of ventilating tubes and still be indicative of the hearing improvement resulting from the surgery.

Acoustic Immittance Measurements

Acoustic immittance measurements evaluate how well energy flows through the outer and middle ear systems. The basic measurements include tympanometry and acoustic reflexes, both of which are often considered routine parts of an audiologic evaluation. Immittance measurements are sensitive to middle ear problems, and they help to differentiate cochlear from retrocochlear disorders. The measurements are made by delivering a pure-tone signal through a probe that fits snugly in the ear canal. The sound pressure level (SPL) of this “probe tone” is monitored while the air pressure is varied in the external ear canal. Any changes from the normal SPL patterns are related to the functional integrity of the ear.

Several classification systems have been proposed for tympanograms, some descriptive in nature and others that analyze shapes based on multiple probe tone frequencies and resistance and reactance components.

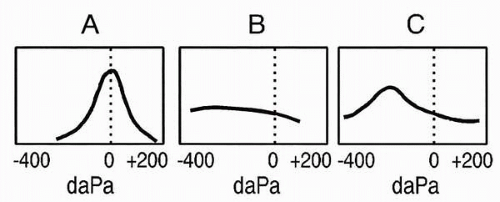

Figure 3.1 shows a commonly used classification that categorizes tympanograms on the basis of shape and tympanometric pressure peak (3). These basic types are based on adult ears and are associated with a low-frequency probe tone, usually 226 Hz. Type A is a normal tympanogram with peak immittance at or near 0 decaPascals (daPa). Variations of type A include type As, in which the peak is shallower than normal, and type AD, in which the peak is higher than normal. Type As may be found in cases of ossicular fixation, whereas type AD may be found with ossicular discontinuity or tympanic membrane abnormality. The type B, or “flat,” tympanogram depicts very little or no change in immittance with variation in air pressure and is found in cases of middle ear effusion. The type C tympanogram has a negative peak, indicating negative air pressure in the middle ear space.
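The classification logic described above can be expressed as a short decision sequence. The sketch below is illustrative only: the text gives no numeric cutoffs, so every threshold here is a hypothetical default passed as a parameter, and the choice of millimhos (mmho) as the unit of peak admittance is also an assumption.

```python
def classify_tympanogram(peak_pressure_dapa, peak_admittance_mmho,
                         flat_below=0.1, shallow_below=0.3, deep_above=1.6,
                         negative_pressure_below=-100):
    """Assign a tympanogram type from peak pressure and peak height.

    All cutoff values are hypothetical placeholders, not values from the text.
    """
    if peak_admittance_mmho < flat_below:
        return "B"    # little or no change in immittance: "flat"
    if peak_pressure_dapa < negative_pressure_below:
        return "C"    # peak shifted to negative pressure
    if peak_admittance_mmho < shallow_below:
        return "As"   # peak near 0 daPa but shallower than normal
    if peak_admittance_mmho > deep_above:
        return "AD"   # peak near 0 daPa but higher than normal
    return "A"        # normal peak at or near 0 daPa


# Hypothetical measurement: peak at -5 daPa with a height of 0.8 mmho.
example_type = classify_tympanogram(-5, 0.8)
```

The flat check is made first because a type B trace has no meaningful peak pressure to evaluate.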

Tympanometry is ordered when there is a question about the mobility of the tympanic membrane, the air pressure within the middle ear space, or the status of the ossicular chain. It provides useful information about middle ear effusions that may not be obvious clinically, such as an effusion behind a thick or opacified tympanic membrane that impairs otoscopic evaluation of the middle ear. Tympanometry is done to determine the presence of persistent, abnormally high or low middle ear pressures in patients with complaints of aural fullness. It is valuable in differentiating between abnormally fixed or interrupted ossicular chains behind an intact tympanic membrane. In patients with rhythmic tinnitus or clicking sensations in their ears, tympanometry is used to determine if the tympanic membrane is being contracted abnormally by clonic middle ear muscle contractions.

The acoustic reflex, or stapedius muscle reflex, is a bilateral response elicited by loud sounds. In normal ears the reflex occurs at 70- to 100-dB hearing level (HL) for pure-tone signals (4). Clinical measurements include reflex threshold, the lowest sound level that elicits the reflex, and reflex decay. Both contralateral and ipsilateral reflexes can be measured, thereby testing the entire reflex arc, including eighth nerve, low brainstem, and facial nerve pathways. Acoustic reflex testing is ordered when lesions of these structures are suspected, such as in acoustic neuromas and brainstem infarctions, and to help determine the site of involvement of facial nerve disorders.

Auditory Brainstem Response

As one of several groups of auditory evoked potentials, the auditory brainstem response (ABR) does not directly measure hearing but does measure a process that is highly related to hearing sensitivity. The ABR measures electrical activity of the auditory nerve and auditory pathway to the mid-brainstem level. The ABR offers the advantage of being easy to measure in children and adults. It is sensitive to both auditory and neurologic disorders but does not require a behavioral response from the patient.

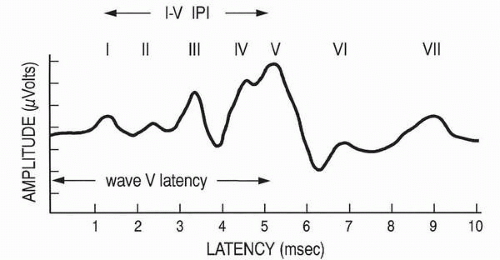

The ABR consists of a series of five to seven waves with latencies between 1 and 10 msec following stimulus presentation (Fig. 3.2). Waves I and II are generated by the peripheral auditory nerve, and waves III, IV, and V are generally related to the cochlear nucleus, superior olivary complex, and nuclei of the lateral lemniscus, respectively (5). Clicks are the best stimuli to elicit the ABR because of their abrupt onset and broad frequency spectrum. The click-evoked ABR provides information about the basal end of the cochlea and is associated with hearing sensitivity in the 2,000 to 4,000 Hz region. More frequency-specific information may be obtained with tone bursts, filtered clicks, and masking techniques.

Clinical interpretation of the ABR is based on measurements of wave latencies, interpeak intervals (IPI), and wave V threshold (see

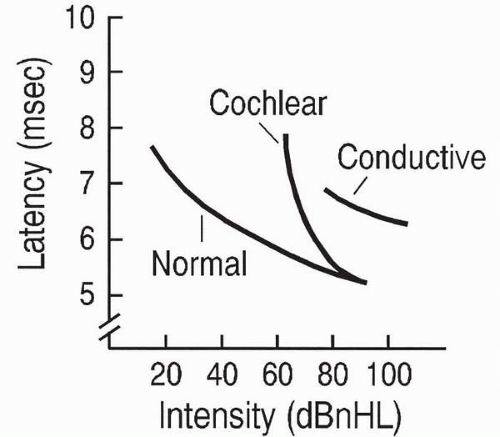

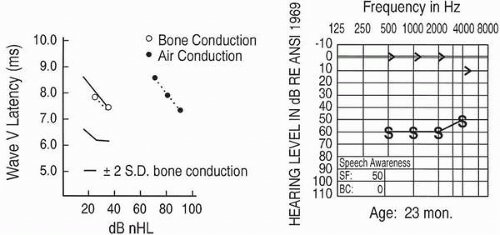

Fig. 3.2). The

wave V latency-intensity function refers to the increase in wave V latency as stimulus intensity decreases. Changes in the shape of the latency-intensity function and in the amount that it deviates from the expected normal function are used to predict type and degree of hearing losses. Patterns associated with conductive

hearing loss and with high-frequency cochlear hearing loss are shown in

Figure 3.3.
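The interpeak intervals used in ABR interpretation are simply differences between absolute wave latencies. The following sketch shows the arithmetic; the function name and the example latencies are hypothetical and are not normative values.

```python
def interpeak_intervals(latencies_ms):
    """Compute the I-III, III-V, and I-V interpeak intervals in msec
    from the absolute latencies of waves I, III, and V."""
    wave_i = latencies_ms["I"]
    wave_iii = latencies_ms["III"]
    wave_v = latencies_ms["V"]
    return {
        "I-III": round(wave_iii - wave_i, 2),
        "III-V": round(wave_v - wave_iii, 2),
        "I-V": round(wave_v - wave_i, 2),
    }


# Hypothetical absolute latencies (msec) for illustration only.
example_latencies = {"I": 1.6, "III": 3.7, "V": 5.6}
example_ipis = interpeak_intervals(example_latencies)
```

Because the intervals are differences, a delay that shifts all waves equally leaves them unchanged, whereas a delay arising between two waves lengthens only the intervals that span it.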

The clinical applications of the ABR are varied. The procedure plays an important role in determining auditory thresholds of young infants and other difficult-to-test patients. It is useful in detecting the presence of lesions in the auditory pathway, such as in acoustic neuroma or multiple sclerosis. It also is valuable for intraoperative monitoring of the integrity of the ear and auditory pathway during intracranial surgery near these structures. Automated versions of the ABR are used in newborn hearing screening.

Evoked Otoacoustic Emissions

Evoked otoacoustic emissions (EOAEs) are sounds recorded in the ear canal that are associated with normal cochlear function. They do not measure hearing sensitivity directly, but their presence is highly related to normal outer hair cell activity. Although otoacoustic emissions may occur spontaneously, the most common clinical application is to measure the emissions in response to an evoking stimulus. Transient evoked otoacoustic emissions (TEOAEs), which occur after the presentation of a brief stimulus such as a click, typically are measured if hearing thresholds do not exceed 30 dB HL. Distortion product otoacoustic emissions (DPOAEs) are produced by the ear in response to two simultaneous pure tones. They are present if hearing thresholds do not exceed 50 dB HL (6). The middle ear system must be normal in order for EOAEs to be measured.

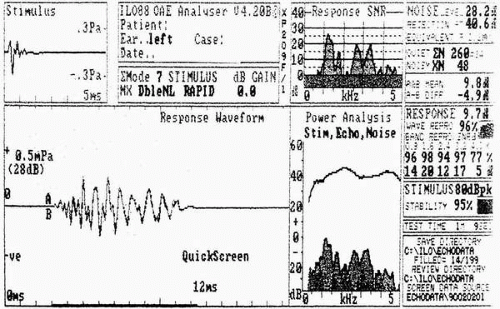

Clinical interpretation of EOAEs is based on amplitude of the emission at a specific frequency or across a frequency range. To be accepted as a response, the EOAE must have good replicability and must exceed the noise floor measured at the time of testing.

Figure 3.4 shows a normal TEOAE response from an adult.
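The two acceptance criteria described above, an emission that exceeds the noise floor and good replicability, can be combined into a single check. This is a sketch under stated assumptions: the text gives no numeric criteria, so the 6-dB signal-to-noise margin, the 70% reproducibility minimum, and the function name are all hypothetical.

```python
def eoae_response_present(emission_db_spl, noise_floor_db_spl, reproducibility_pct,
                          min_snr_db=6.0, min_reproducibility_pct=70.0):
    """Accept an EOAE only if it exceeds the noise floor by a margin and
    replicates well. Both cutoffs are hypothetical, not values from the text."""
    snr_db = emission_db_spl - noise_floor_db_spl
    return snr_db >= min_snr_db and reproducibility_pct >= min_reproducibility_pct


# Hypothetical measurement: 10 dB SPL emission, -2 dB SPL noise floor,
# 90% reproducibility between repeated recordings.
example_accepted = eoae_response_present(10.0, -2.0, 90.0)
```

Requiring both conditions guards against accepting a trace that is large only because the recording was noisy.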

The measurement of EOAEs has many clinical applications, including differentiation between sensory and neural hearing loss, monitoring of cochlear function of patients treated with ototoxic drugs, tinnitus evaluation, and monitoring of noise-induced hearing loss. EOAEs are essential to the audiologic evaluation of young infants and other difficult-to-test patients. They are used extensively in newborn hearing screening.
